Performance issue in Dataiku.

Hi, I am new to Dataiku and am building a pipeline like this: Databricks read-only dataset -> Prepare recipe (Databricks dataset) -> (Sync) Databricks dataset -> (Sync) Azure dataset, followed by further processing. In the Prepare recipe I keep only the required columns and rename them so that the names contain no spaces.

The pipeline is shown in the screenshot below.

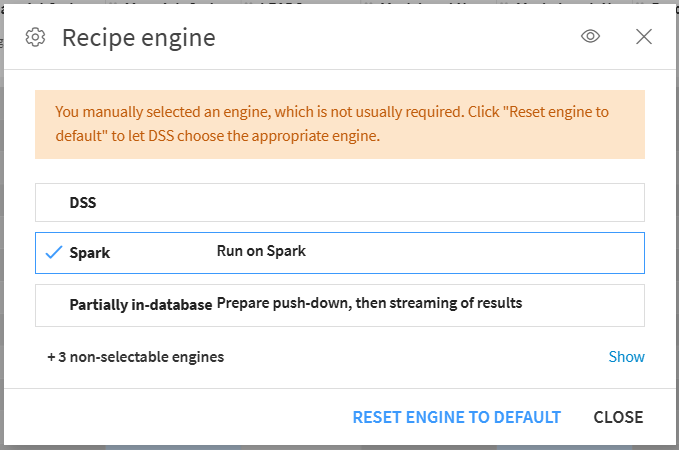

Whenever I add a new column in the Prepare recipe and run the pipeline, it takes a long time to process, around 2+ hours. I have tried both the DSS and Spark engine options available in the Prepare recipe.

I am processing around 29 million records.

Is there any way to improve the speed?

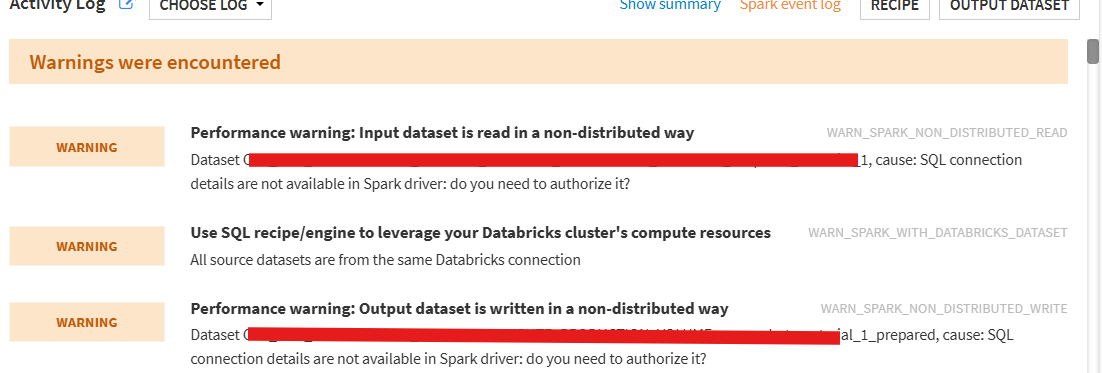

I am also getting some warnings like:

Operating system used: Windows

Answers

Turribeach Dataiku DSS Core Designer, Neuron, Dataiku DSS Adv Designer, Registered, Neuron 2023, Circle Member Posts: 2,697 Neuron

Moving 29 million rows is never a good idea. It also makes little sense to move data from Databricks to Azure, since Databricks is a far more capable data technology. There are, however, a few things you need to consider when working with Dataiku.

You should always try to use "push down" compute. With a SQL engine like Databricks, this means the recipe should execute in the "SQL Engine". You can toggle the "Recipe Engine" view in the Flow to see where you are not using SQL.

Always try to use the same connection for your inputs and outputs; otherwise Dataiku has to "stream" the data from the input connection to the output connection, which is slower because it runs in the "DSS" engine. If your flow reads from a Databricks connection with read access to one schema (say schema1) and a Prepare recipe writes to another Databricks connection with write access to another schema (say schema2), then you should ask your Databricks administrator to grant read access on schema1 under the same credentials that have write access to schema2. This will allow you to perform transformations directly on the read-only schema's tables without first having to sync the data to schema2.

Finally, avoid using Azure Blob connections, as these are much slower and can't use push down compute. If the data needs to be pushed to Azure Blob storage, do it as the very last step.
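
To make the "push down" versus "stream" difference concrete, here is a small analogy in Python using the standard-library `sqlite3` module, not Dataiku or Databricks. `ATTACH` plays the role of a single credential that can both read schema1 and write schema2; the table and column names are hypothetical. `push_down` does the column selection and renaming in one SQL statement executed inside the database, while `stream` pulls every row into the client process and writes it back, loosely similar to what happens when inputs and outputs sit on different connections.

```python
import sqlite3

# One connection that can see two "schemas" (hypothetical stand-ins):
# main    ~ schema1, the source table
# schema2 ~ the writable target schema
conn = sqlite3.connect(":memory:")
conn.execute("ATTACH DATABASE ':memory:' AS schema2")

conn.execute('CREATE TABLE main.source ("Customer Name" TEXT, "Order Total" REAL, notes TEXT)')
conn.executemany(
    "INSERT INTO main.source VALUES (?, ?, ?)",
    [("Alice", 10.5, "x"), ("Bob", 20.0, "y"), ("Carol", 7.25, "z")],
)


def push_down(conn: sqlite3.Connection) -> None:
    """Select and rename columns in one statement; rows never leave the database engine."""
    conn.execute("DROP TABLE IF EXISTS schema2.prepared")
    conn.execute(
        'CREATE TABLE schema2.prepared AS '
        'SELECT "Customer Name" AS customer_name, "Order Total" AS order_total '
        "FROM main.source"
    )


def stream(conn: sqlite3.Connection) -> None:
    """Pull every row into this Python process, then write each one back."""
    conn.execute("DROP TABLE IF EXISTS schema2.streamed")
    conn.execute("CREATE TABLE schema2.streamed (customer_name TEXT, order_total REAL)")
    rows = conn.execute('SELECT "Customer Name", "Order Total" FROM main.source').fetchall()
    conn.executemany("INSERT INTO schema2.streamed VALUES (?, ?)", rows)
```

Both functions produce the same table. With three rows the difference is invisible; with 29 million rows, `stream` has to move every row through the client, which is exactly the cost that keeping input and output on one connection avoids.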

Not all recipes support push down compute, and for some, like the Prepare recipe, it depends on which processors you use. Each Prepare step shows a green SQL icon when it is supported in SQL.
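
Keeping only the required columns and renaming them without spaces, as in the question's Prepare recipe, maps naturally onto a single SQL `SELECT` with aliases, which is the kind of step that can run in-database. The helper below is a hypothetical sketch that builds such a statement; it is not how Dataiku generates its SQL, and the naming rule (lower-case, non-alphanumerics replaced by `_`) is an assumption. It quotes identifiers with double quotes by default; Databricks/Spark SQL quotes identifiers with backticks, so pass `q="`"` there.

```python
import re


def to_clean_name(name: str) -> str:
    """Assumed rule: replace runs of non-alphanumerics with '_', trim, lower-case."""
    return re.sub(r"[^0-9a-zA-Z]+", "_", name).strip("_").lower()


def quote_ident(name: str, q: str = '"') -> str:
    """Quote an identifier, doubling any embedded quote character."""
    return q + name.replace(q, q + q) + q


def select_and_rename_sql(table: str, columns: list[str], q: str = '"') -> str:
    """Build one SELECT that keeps only `columns` and aliases each to a clean name."""
    select_list = ", ".join(
        f"{quote_ident(c, q)} AS {quote_ident(to_clean_name(c), q)}" for c in columns
    )
    return f"SELECT {select_list} FROM {quote_ident(table, q)}"


# Hypothetical table and column names, for illustration only.
print(select_and_rename_sql("orders", ["Customer Name", "Order Total"]))
```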