How can I store the Twitter stream without overloading my Dataiku server

Alex_Combessie

Alpha Tester, Dataiker Alumni Posts: 539 ✭✭✭✭✭✭✭✭✭

I have a Dataiku Twitter Stream dataset which collects around 1GB of data per week. Now I have around 10GB of data, which has overloaded my Dataiku server. I managed to clear some space, but I will soon hit the size limit again.

I also have a large data cluster connected to the Dataiku server, but I can't store the Twitter stream directly in it. What would be a scalable way to transfer data from the Twitter Stream dataset to my cluster automatically every day?

Answers

-

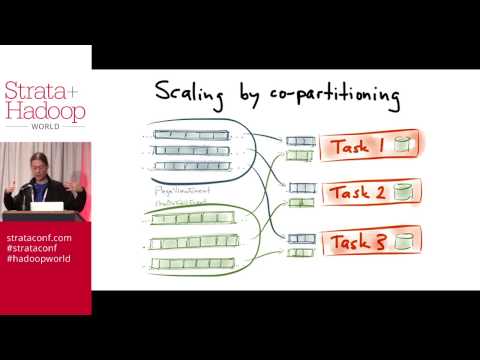

Hi, I suggest looking into a Kafka or Samza solution and processing the Twitter stream through it. That way you can store the messages in that layer and process them without overloading your Dataiku server.

https://www.youtube.com/watch?v=yO3SBU6vVKA
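
To make the idea of a buffering layer a bit more concrete, here is a minimal sketch of the "drain the buffer in batches and land it on the cluster" step. It is only an illustration under assumptions: the `timestamp` field (epoch seconds), the `flush_batch` and `partition_key` names, and the `out_dir` path standing in for cluster storage are all hypothetical, and in a real setup the messages would come from a Kafka consumer rather than a Python list.

```python
import json
from collections import defaultdict
from datetime import datetime, timezone
from pathlib import Path


def partition_key(message: dict) -> str:
    """Return the UTC day (YYYY-MM-DD) a message belongs to.

    Assumes a hypothetical 'timestamp' field holding epoch seconds.
    """
    ts = datetime.fromtimestamp(message["timestamp"], tz=timezone.utc)
    return ts.strftime("%Y-%m-%d")


def flush_batch(messages, out_dir):
    """Append a batch of messages to one JSON-lines file per UTC day.

    out_dir is a stand-in for a path on the cluster storage.
    Returns a dict mapping each day to the number of messages written.
    """
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)

    # Group the batch by day so each file is opened only once.
    by_day = defaultdict(list)
    for msg in messages:
        by_day[partition_key(msg)].append(msg)

    written = {}
    for day, msgs in by_day.items():
        path = out_dir / f"tweets-{day}.jsonl"
        with path.open("a", encoding="utf-8") as fh:
            for msg in msgs:
                fh.write(json.dumps(msg) + "\n")
        written[day] = len(msgs)
    return written
```

Because the files are split by day, a daily job can pick up only the previous day's file and the buffer itself never has to hold more than one batch.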

Hope it helps.

mario