
Hello everyone

I currently use Dataiku version 6.0.4, and I would like to know whether there is any element or configuration that tells me how many users log in to the application each day, and whether I can check the history of how many users logged in to Dataiku DSS over a month.

I'll stay tuned.

Thanks a lot


In DSS V8.0.4 (the latest version), at the project level you have the Activity Summary, Contributors, and Punch card (not shown here) views.

This feature appears to have been added in V3.0.0.

In the same version, a "Global usage Summary" was added.

However, I'm not finding much documentation on this feature.

cc: @CoreyS

Hi @Carl7189

User login information is stored in the audit log files. Recent activity is shown on the Administration > Security > Audit Trail page, but that will not meet your need (a count per day/month).

You would need to recursively grep the records in the DATA_DIR/run/audit directory for "/api/login" and return only the username and timestamp, though I'm not sure how you would then count them per day/month. Technically, you can make this grep call from a Python recipe and use a pandas DataFrame to save the result as a table. After that, you can filter the timestamps by day or by month.
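As a rough sketch of that approach (Python 3), the code below walks the audit directory recursively, keeps only lines containing "/api/login" (the grep step), and counts login records per day and per month with pandas. It assumes each line is a JSON record with a `timestamp` field and a `message.authUser` field, as used in the recipe code elsewhere in this thread; the helper names and the directory path are hypothetical and should be adapted to your own DATA_DIR.

```python
import json
import os

import pandas as pd


def read_login_records(audit_dir):
    """Hypothetical helper: grep-like scan of every file under audit_dir for
    '/api/login' lines, returning a DataFrame of (user, timestamp)."""
    records = []
    for root, _dirs, files in os.walk(audit_dir):  # recursive, like grep -r
        for name in sorted(files):
            path = os.path.join(root, name)
            with open(path, encoding='utf-8', errors='replace') as f:
                for line in f:
                    if '/api/login' not in line:
                        continue  # the grep step
                    try:
                        entry = json.loads(line)
                    except ValueError:
                        continue  # skip lines that are not valid JSON
                    message = entry.get('message')
                    # assumed field layout: message.authUser holds the user name
                    user = message.get('authUser') if isinstance(message, dict) else None
                    records.append({'user': user, 'timestamp': entry.get('timestamp')})
    return pd.DataFrame(records, columns=['user', 'timestamp'])


def count_logins(df):
    """Count login records per day ('YYYY-MM-DD') and per month ('YYYY-MM')."""
    ts = pd.to_datetime(df['timestamp'], utc=True)
    per_day = ts.dt.strftime('%Y-%m-%d').value_counts().sort_index()
    per_month = ts.dt.strftime('%Y-%m').value_counts().sort_index()
    return per_day, per_month


# Example (the path is an assumption; point it at your own DATA_DIR/run/audit):
# logins = read_login_records('/path/to/DATA_DIR/run/audit')
# per_day, per_month = count_logins(logins)
```

The resulting `logins` DataFrame could also be written to a DSS dataset from the recipe, so the per-day/per-month filtering can be done with visual recipes instead.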

Hi Carl,

In addition to the project-level metrics, you can also parse your audit.log file to retrieve the total number of active users in DSS.

Here is some starter code showing how you could do this in a Python recipe, pulling all active users from the available audit.log data. This example writes the output to a new dataset, active_users, which has an active_date column and a user column.

```python
import json
from datetime import datetime, timedelta

import dataiku
import pandas as pd

# Relative path from the code's working directory to DATA_DIR/run/audit/audit.log;
# the number of "../" levels depends on where the code runs
with open('../../../../../../run/audit/audit.log') as f:
    lines = f.readlines()

active_users_set = set()  # (date, user) tuples

# Once historical data is populated, a check can be run on the timestamps
# to only process data for the previous day
yesterday = datetime.today() - timedelta(days=1)
yesterday_formatted = yesterday.strftime('%Y-%m-%d')

# Each line of the audit log is one JSON record
for line in lines:
    if not line.strip():
        continue  # skip blank lines
    entry = json.loads(line)
    # Just pull the date part of the timestamp
    audit_date = entry['timestamp'].split('T')[0]
    # For daily runs, can include the check: `if audit_date == yesterday_formatted:`
    message = entry.get('message')
    # 'message' may be a plain string in some records; checking the type avoids
    # "TypeError: string indices must be integers"
    if isinstance(message, dict) and 'authUser' in message:
        active_users_set.add((audit_date, message['authUser']))

# Build the output DataFrame (explicit columns, so an empty set still works)
active_users_df = pd.DataFrame(list(active_users_set), columns=['active_date', 'user'])

# Write to the dataset that stores all active users per day
# (overwritten on each run unless the recipe output is set to append)
au = dataiku.Dataset("active_users")
au.write_with_schema(active_users_df)
```

Keep in mind that your audit log may rotate (wrap) more often than once a day, and that would need to be accounted for as well.
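One option for handling rotation is to read the current file together with its rotated copies. The sketch below assumes rotated files sit in the same directory and share the `audit.log` name prefix, possibly gzip-compressed (`.gz`); check the actual file names in your DATA_DIR/run/audit before relying on that. `iter_audit_lines` is a hypothetical helper name.

```python
import glob
import gzip
import os


def iter_audit_lines(audit_dir, prefix='audit.log'):
    """Yield every non-blank line from audit.log and any rotated copies whose
    name starts with the same prefix (assumed naming scheme)."""
    paths = sorted(glob.glob(os.path.join(audit_dir, prefix + '*')))
    for path in paths:
        # Rotated files may be gzip-compressed; open them as text either way
        opener = gzip.open if path.endswith('.gz') else open
        with opener(path, 'rt', encoding='utf-8') as f:
            for line in f:
                if line.strip():
                    yield line
```

The loop in the recipe code above could then iterate over `iter_audit_lines(...)` instead of the lines of a single file. Because results are collected in a set of (date, user) tuples, a user seen in several files on the same day is still counted once.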

From here, you can aggregate the resultant dataset by day / week / month in order to get your aggregated user counts.
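A minimal pandas sketch of that aggregation, assuming a DataFrame shaped like the `active_users` dataset above (`active_date` as 'YYYY-MM-DD' strings, `user` as the user name). It counts distinct users per period; a visual Group recipe would give the same result. `active_user_counts` is a hypothetical name.

```python
import pandas as pd


def active_user_counts(active_users_df, freq):
    """Distinct active users per period.

    freq is a pandas period alias: 'D' (day), 'W' (week) or 'M' (month).
    """
    dates = pd.to_datetime(active_users_df['active_date'])
    periods = dates.dt.to_period(freq)
    # Group rows by period and count unique user names in each group
    return active_users_df.groupby(periods)['user'].nunique()
```

For example, `active_user_counts(dataiku.Dataset("active_users").get_dataframe(), 'M')` would give one row per month.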

Thanks,

Sarina

While testing the code segment, I got to the following line:

`f = open('../../../../../../run/audit/audit.log')`

and I get the following:

```
IOError                                   Traceback (most recent call last)
<ipython-input-3-dd8957f59dcb> in <module>()
----> 1 f = open('../../../../../../run/audit/audit.log')
      2 lines = f.readlines()

IOError: [Errno 2] No such file or directory: '../../../../../../run/audit/audit.log'
```

When I try `f = open('../../../../run/audit/audit.log')` on a Macintosh installation, going only four directories up, I get something that works. That gets me back to my DSS home directory.

Is there a variable available to Python running under DSS that gives the home directory location more reliably?

Hey Tom,

The relative path will depend on how the Python code is run. As you suggest, you could create a global variable that points to the full path of your data directory, like so:

```json
{"DATA_DIR": "/path/to/dss"}
```

And then you can use the variable to set the path to your logging files:

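```python
import os

import dataiku

client = dataiku.api_client()
# Global variables of the DSS instance, as a dict
# (named global_vars to avoid shadowing Python's built-in vars())
global_vars = client.get_variables()

with open(os.path.join(global_vars['DATA_DIR'], 'run', 'audit', 'audit.log')) as f:
    lines = f.readlines()
```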
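A slightly more defensive sketch for building the path, assuming either the `DATA_DIR` global variable shown above or, as a fallback, a `DIP_HOME` environment variable pointing to the DSS data directory. DSS typically sets `DIP_HOME` for the processes it launches, but treat that as an assumption and verify it on your instance. `audit_log_path` is a hypothetical helper; `os.path.join` avoids doubled or missing slashes.

```python
import os


def audit_log_path(variables, environ=os.environ):
    """Return DATA_DIR/run/audit/audit.log, taking the data directory from the
    DATA_DIR variable if present, else from the DIP_HOME environment variable."""
    data_dir = variables.get('DATA_DIR') or environ.get('DIP_HOME')
    if not data_dir:
        raise ValueError('Set a DATA_DIR global variable or DIP_HOME')
    return os.path.join(data_dir, 'run', 'audit', 'audit.log')
```

In a recipe, `variables` could be the dict returned by `client.get_variables()`.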

For the indices error that you are seeing, I would suggest adding a print statement in the for loop to verify the entry data. You may need to add another validity check before the user_date assignment.
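One way to follow that suggestion without printing every line is to report only the records whose `message` field is present but is not a dictionary, since those are the records where `entry['message']['authUser']` can fail. A sketch, assuming the one-JSON-record-per-line format used in the recipe code; `find_suspect_entries` is a hypothetical name.

```python
import json


def find_suspect_entries(lines, limit=5):
    """Return up to `limit` (line_number, type_name, preview) tuples for
    audit records whose 'message' is present but is not a dict."""
    suspects = []
    for number, line in enumerate(lines, start=1):
        if not line.strip():
            continue  # ignore blank lines
        entry = json.loads(line)
        message = entry.get('message')
        if message is not None and not isinstance(message, dict):
            suspects.append((number, type(message).__name__, str(message)[:80]))
            if len(suspects) >= limit:
                break
    return suspects
```

Running `for item in find_suspect_entries(lines): print(item)` in the notebook shows the line number and the kind of value that breaks the original check.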

Thanks,

Sarina

In a quick check, I could not find anything wrong with the data; it looked OK visually.

So I don't know what additional check to put in place.

I'm going to let it go, at least for tonight.

When running your demo code, I'm running into a problem that I don't understand.

```python
# each line of the audit log
for line in lines:
    entry = json.loads(line)
    # just pull the date field
    audit_date = entry['timestamp'].split('T')[0]
    # for daily runs, can include the check: `if audit_date == yesterday_formatted:`
    if 'message' in entry and 'authUser' in entry['message']:
        user_date = (audit_date, entry['message']['authUser'])
        active_users_set.add(user_date)
```

This iterates about 2300 times, then I get the following error:

```
TypeError                                 Traceback (most recent call last)
<ipython-input-76-f01f734a6b86> in <module>()
      6     # for daily runs, can include the check: `if audit_date == yesterday_formatted:`
      7     if 'message' in entry and 'authUser' in entry['message']:
----> 8         user_date = (audit_date, entry['message']['authUser'])
      9         active_users_set.add(user_date)

TypeError: string indices must be integers
```

Something seems to be wrong with the data, although a visual inspection of the records at this point in the file does not show an obvious problem.

However, the problem seems to be with the expression

`entry['message']['authUser']`

When I take it out of the assignment, making it `user_date = (audit_date)`, the code runs to completion. Hmmmm...

Thoughts?

Testing from a Jupyter notebook using DSS V8.0.4 on a Mac. (The problem shows up with either Python 2 or Python 3.)
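A likely explanation, consistent with the traceback: in some audit records `message` is a plain string rather than a JSON object. For a string, `'authUser' in entry['message']` is a substring test, so it is True whenever the text mentions authUser, and indexing that string with `['authUser']` then raises `TypeError: string indices must be integers`. That would also explain why the records look fine visually. The snippet below reproduces the behavior with a made-up record (not taken from a real audit log) and shows a type check that avoids it; `auth_user` is a hypothetical helper name.

```python
import json

# Illustrative, made-up record: 'message' is a string that mentions authUser
line = '{"timestamp": "2020-11-20T10:00:00Z", "message": "login by authUser=alice"}'
entry = json.loads(line)

# The original condition passes, because `in` on a str is a substring test...
print('message' in entry and 'authUser' in entry['message'])  # True

# ...but indexing a str with a str key raises TypeError
try:
    entry['message']['authUser']
except TypeError as err:
    print('TypeError:', err)


def auth_user(entry):
    """Return message['authUser'] only when 'message' is a dict."""
    message = entry.get('message')
    if isinstance(message, dict):
        return message.get('authUser')
    return None


print(auth_user(entry))  # None
print(auth_user({'message': {'authUser': 'alice'}}))  # alice
```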

If only there was a software that could help you visually prepare and analyze data rather than having to write code for that 🙂

Lol @Clément_Stenac. Thanks for the pointer.

Yeah, so basically, @Carl7189, you can just create a managed folder pointing to the DATA_DIR/run/audit log directory and create a dataset based on it. Then use a Prepare recipe to filter the data on /api/login calls, keeping only the API call and timestamp:

From this point, you can group/filter by time to get what you were looking for. It was easier than we all thought.

@sergeyd,

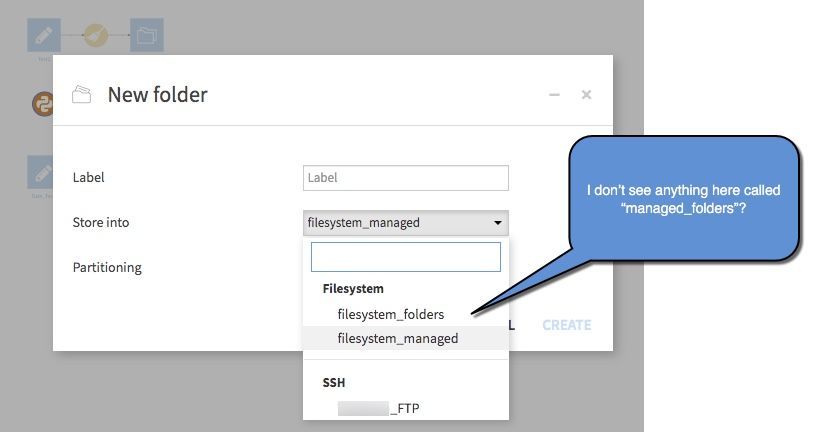

When thinking about operationalizing something like this, what is the best way to create this managed folder?

I found this page in the documentation on "Creating a managed folder".

The documentation states: "The default connection to create managed folders on is named "managed_folders"." However, when I look at setting up a managed folder, I'm not seeing a "managed_folders" option (or I'm looking in the wrong place).

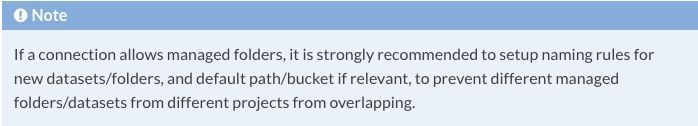

I also see this note that I do not completely understand.

Is this suggesting the creation of a managed connection for accessing files from multiple projects?

Thanks @sergeyd. I understand that this would be a manual process rather than an automated one, right?

As with all data operations in Dataiku DSS, you could create a scenario to automate many aspects of processing the data. The results could then be presented in a dashboard for the consumption of insights.

This is very cool.

Is there any documentation for the meaning of the columns of these logs?

Just in case you need a step-by-step procedure:

1) Create a new FS connection (Administration->Connections):

2) Set the path to audit directory:

3) Create a new dataset based on this connection (make sure to read all the files):

4) Set the name and save it.

From this point, you can use prepare or any other recipes you want.

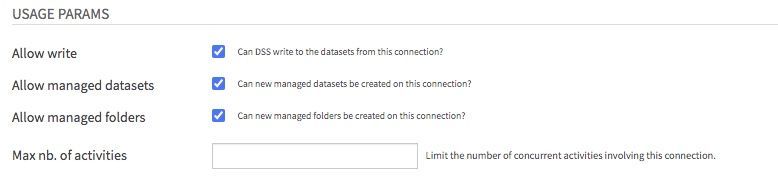

As for the other settings on the connection, if one wants to make this production-ready:

I'm wondering about the settings under USAGE PARAMS...

I think I'd want to uncheck "Allow write" for this connection. The same goes for "Allow managed datasets" and "Allow managed folders".

These are just text files. For safety, do we need to set "Max nb of activities" to one, or can multiple processes have the files open at the same time?

What do you think?

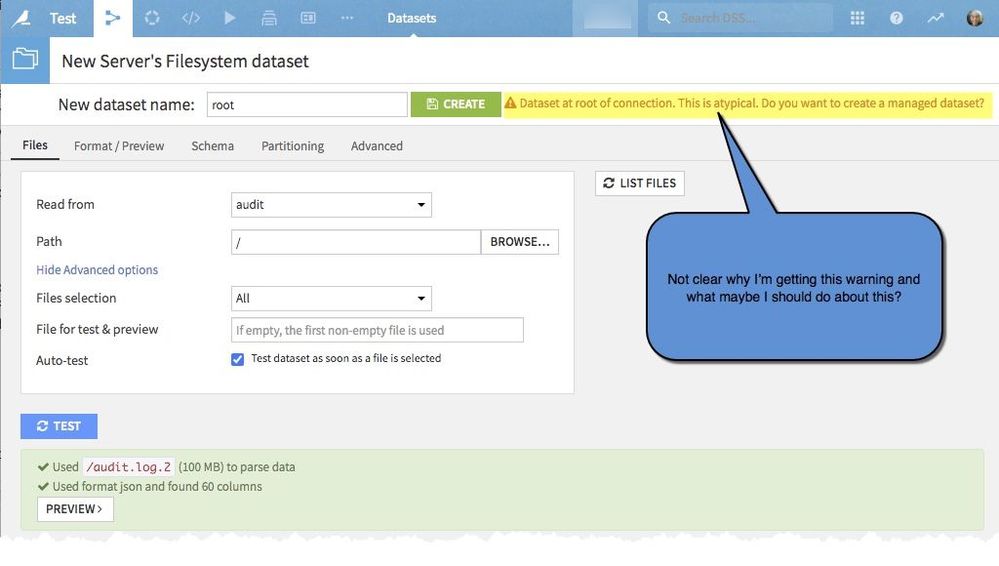

So, I'm still on this quest.

When using DSS 8.0.4, I'm getting some warnings when trying to create the dataset in a project using the audit connection. Here is what I'm seeing.

What is this warning about? Is this anything to be concerned about?

@tgb417, we check the path when a connection is created; if it is just '/', we show that message. This is only a precaution in case the path really is set to '/' (the filesystem root), in which case writing or deleting something through the connection could break your Linux system.

If you created this connection from DATA_DIR/run/audit/, this '/' just means the root of the audit directory (not the root of the entire FS), so no worries here.

Hi everyone. @sergeyd, why create a new connection rather than just creating a new dataset from the Filesystem connection?

For a few months now I've been monitoring resource usage by users, and I created a Filesystem dataset with this configuration:

(The path is only valid for my particular machine, where DSS_DATA_DIR is /data/dataiku/dss_data, of course.)

This has been working without problems, so I wonder whether you recommend creating a new connection because of security concerns?

Thanks! Ignacio.

By the way, these are the parser settings in the Format/Preview section when creating the dataset from the audit files, in case they are useful to others:

Sure, you can use the default root filesystem connection, but assuming it gives access to the entire FS, you need to be sure that users know what they are doing and don't put random stuff into directories they shouldn't. Of course, root-owned directories and files will remain untouched, but I wouldn't go that route.