6 Hidden Gems in Dataiku’s AutoML

Automated Machine Learning, or AutoML, is a proven accelerator for model development and iteration. If you’ve taken training or used Dataiku before, you’ll know that it supports Supervised, Unsupervised, Deep Learning, and, more recently, Computer Vision use cases with the built-in AutoML.

In today’s article, we will explore some lesser-known but extremely powerful features in Dataiku's AutoML that can be grouped into two categories: Efficient Experimentation and Responsible AI.

Efficient Experimentation

No great model stems from a single experiment, which is why frequent iteration is critical to success. Dataiku supports you with a variety of hidden gems in the efficient experimentation category.

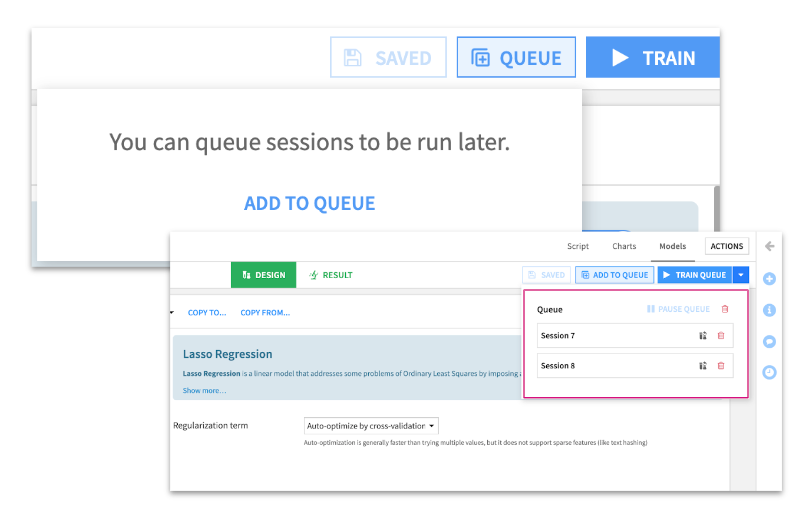

Model Training Queue

One of the most important yet underrated features in this space is the Model Training Queue. This allows you to set up several training sessions that will all execute without further intervention.

This is ideal if you only have a short time with a subject matter expert: you can turn each of their suggestions into a separate experiment and queue them to train one after another. Alternatively, you can queue many experiments at the end of the day and return the following morning to find all your models trained and ready for review.

For each of these training sessions, the Results tab automatically populates many assets that support explainability (partial dependence plots, subpopulation analysis, variable dependence) and performance measurement (scatter plots, error distribution, metrics, and more).

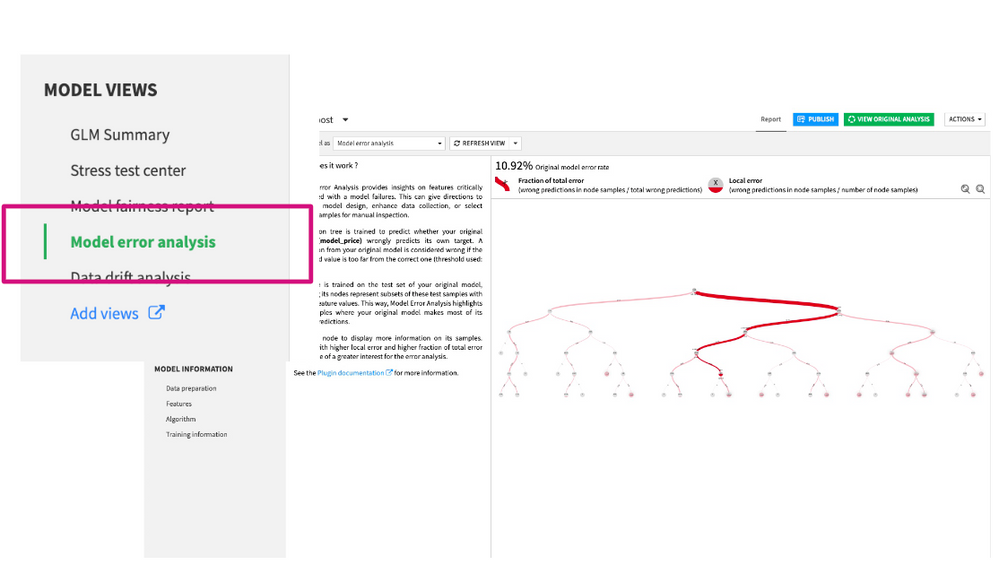

Model Error Analysis

Within the Results tab, you’ll also find Model Views, including Model Error Analysis, which helps you develop a deeper understanding of your model. Error analysis is essential in the iterative process of model design and feature engineering. It is usually performed manually, but Dataiku provides a powerful, autogenerated view that digs into the issues, so you can easily highlight the most frequent types of errors as well as the features most often associated with them.
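To make the idea of "features most often associated with errors" concrete, here is a minimal from-scratch sketch. It is not Dataiku's implementation (more sophisticated approaches exist, for example training a secondary decision tree to predict where the model errs); it simply groups rows by each categorical feature's values, computes the error rate per segment, and ranks features by how far their worst segment exceeds the overall error rate. The function names and the toy data are hypothetical.

```python
from collections import defaultdict


def error_rate_by_segment(rows, y_true, y_pred, feature):
    """Return (value, error_rate) pairs for one feature, most error-prone first."""
    counts = defaultdict(lambda: [0, 0])  # feature value -> [errors, total rows]
    for row, truth, pred in zip(rows, y_true, y_pred):
        bucket = counts[row[feature]]
        bucket[0] += int(truth != pred)
        bucket[1] += 1
    rates = {value: errors / total for value, (errors, total) in counts.items()}
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


def rank_features_by_error_concentration(rows, y_true, y_pred, features):
    """Rank features by how much their worst segment's error rate exceeds the overall rate."""
    overall = sum(t != p for t, p in zip(y_true, y_pred)) / len(y_true)
    ranking = []
    for feature in features:
        worst_value, worst_rate = error_rate_by_segment(rows, y_true, y_pred, feature)[0]
        ranking.append((feature, worst_value, worst_rate - overall))
    return sorted(ranking, key=lambda item: item[2], reverse=True)


# Toy data, invented purely for illustration.
rows = [
    {"region": "north", "channel": "web"},
    {"region": "north", "channel": "store"},
    {"region": "south", "channel": "web"},
    {"region": "south", "channel": "web"},
    {"region": "south", "channel": "store"},
    {"region": "north", "channel": "web"},
]
y_true = [1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 0, 1, 1, 0]

for feature, value, excess in rank_features_by_error_concentration(
    rows, y_true, y_pred, ["region", "channel"]
):
    print(f"{feature}={value}: error rate {excess:+.2f} relative to overall")
```

In this toy data both errors fall in the `south`/`web` rows, so `region=south` surfaces as the most error-concentrated segment. A real error analysis view would also handle numeric features and combinations of conditions.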

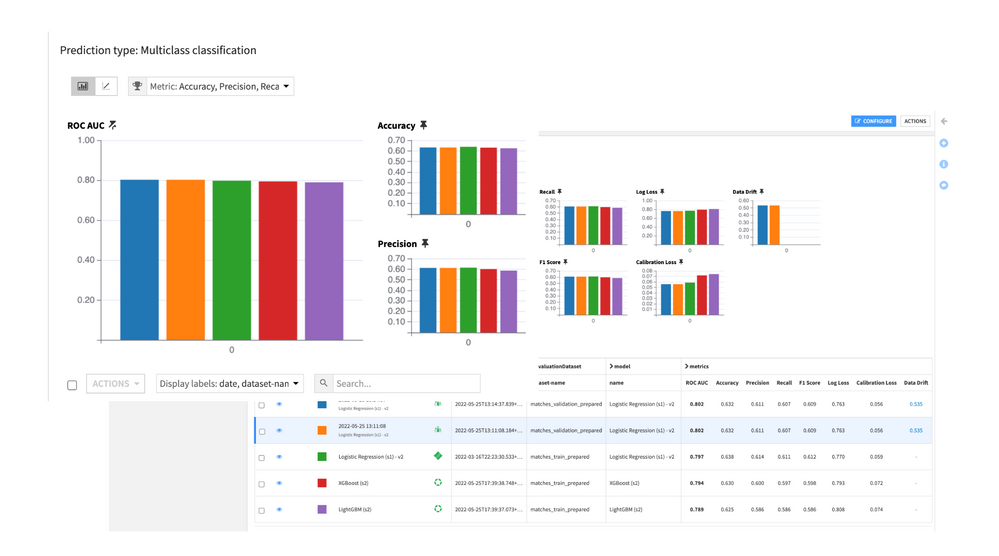

Model Comparison Functionality

After many training sessions, it is time to use the Model Comparison Functionality. A model comparison includes key information not only about the performance of the chosen models but also about training parameters and feature handling.

Many of the same performance visualizations available for any individual model in the Lab can now be compared side by side. For example, the chart below shows a variety of metrics for five candidate models. This makes it easier to choose a champion that you’d like to promote and eventually move into production.
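As a rough illustration of what a side-by-side comparison boils down to, the sketch below computes a few standard binary classification metrics for several candidate models on the same test labels and picks a champion by a chosen metric. It is a hypothetical stand-in, not Dataiku's comparison feature; the model names and predictions are invented.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = positive class)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    correct = sum(1 for t, p in pairs if t == p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": correct / len(pairs), "precision": precision, "recall": recall, "f1": f1}


def compare_models(y_true, predictions_by_model, metric="f1"):
    """Build a per-model metrics table and return it with the best model by `metric`."""
    table = {name: classification_metrics(y_true, preds) for name, preds in predictions_by_model.items()}
    champion = max(table, key=lambda name: table[name][metric])
    return table, champion


# Hypothetical test labels and candidate predictions.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
candidates = {
    "logistic_regression": [1, 0, 1, 0, 0, 0, 1, 0],
    "random_forest": [1, 0, 1, 1, 0, 1, 1, 0],
    "always_positive": [1] * 8,
}

table, champion = compare_models(y_true, candidates, metric="f1")
for name, metrics in table.items():
    print(f"{name:<20}" + "  ".join(f"{k}={v:.3f}" for k, v in metrics.items()))
print("champion by f1:", champion)
```

Note that the "champion" depends on the metric you care about: here one model wins on F1 while another wins on precision, which is exactly why seeing several metrics side by side is useful.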

Responsible AI

Each of the features we’ve shown so far is impressive on its own, but they really shine together because they’re all part of a governed platform that enables your Responsible AI initiatives.

At Dataiku, we see Responsible AI as the process of building and testing methods to ensure AI is fair, transparent, and accountable. We make this easy through three hidden gems: ML Assertions, Model Fairness Reports, and the Model Document Generator.

ML Assertions

ML Assertions provide a way to streamline and accelerate the model evaluation process by automatically checking that predictions for specified subpopulations meet certain conditions.

With ML Assertions, you can programmatically compare the predictions you expect with the model’s output. You define expected predictions on segments of your test data, and Dataiku will check that the model’s predictions align with your judgment.

By defining assertions, you are checking that the model has picked up the most important patterns for your prediction task.

We’re not automating responsibility, we’re making it easier to interrogate your models and keep the human in the loop!
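The core idea of an assertion can be sketched in a few lines: a condition selects a subpopulation, an expected outcome is checked for each prediction in it, and the assertion passes if a minimum share of those rows meets the expectation. The sketch below is a hypothetical illustration of that idea, not Dataiku's API; the `Assertion` class, its field names, the `min_valid_ratio` threshold and the loan data are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Assertion:
    name: str
    condition: Callable[[dict], bool]  # selects the subpopulation to check
    expected: Callable[[Any], bool]  # returns True if a single prediction is acceptable
    min_valid_ratio: float = 0.9  # hypothetical: share of selected rows that must pass


def check_assertion(assertion, rows, predictions):
    """Evaluate one assertion against a test set and its model predictions."""
    selected = [pred for row, pred in zip(rows, predictions) if assertion.condition(row)]
    if not selected:
        # No rows match the condition, so the assertion cannot be evaluated.
        return {"name": assertion.name, "rows": 0, "valid_ratio": None, "passed": None}
    ratio = sum(assertion.expected(pred) for pred in selected) / len(selected)
    return {
        "name": assertion.name,
        "rows": len(selected),
        "valid_ratio": ratio,
        "passed": ratio >= assertion.min_valid_ratio,
    }


# Invented loan applicants and model outputs.
rows = [
    {"income": 120_000, "debt_ratio": 0.10},
    {"income": 95_000, "debt_ratio": 0.20},
    {"income": 150_000, "debt_ratio": 0.05},
    {"income": 30_000, "debt_ratio": 0.80},
    {"income": 25_000, "debt_ratio": 0.90},
]
predictions = ["approve", "approve", "reject", "reject", "reject"]

assertions = [
    Assertion(
        "High income, low debt is approved",
        condition=lambda r: r["income"] >= 90_000 and r["debt_ratio"] <= 0.3,
        expected=lambda p: p == "approve",
    ),
    Assertion(
        "Very high debt is rejected",
        condition=lambda r: r["debt_ratio"] >= 0.7,
        expected=lambda p: p == "reject",
    ),
]

results = [check_assertion(a, rows, predictions) for a in assertions]
for result in results:
    print(result)
```

Here the first assertion fails (only two of the three high-income, low-debt applicants are approved), which is the kind of signal that prompts a human to look more closely at the model.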

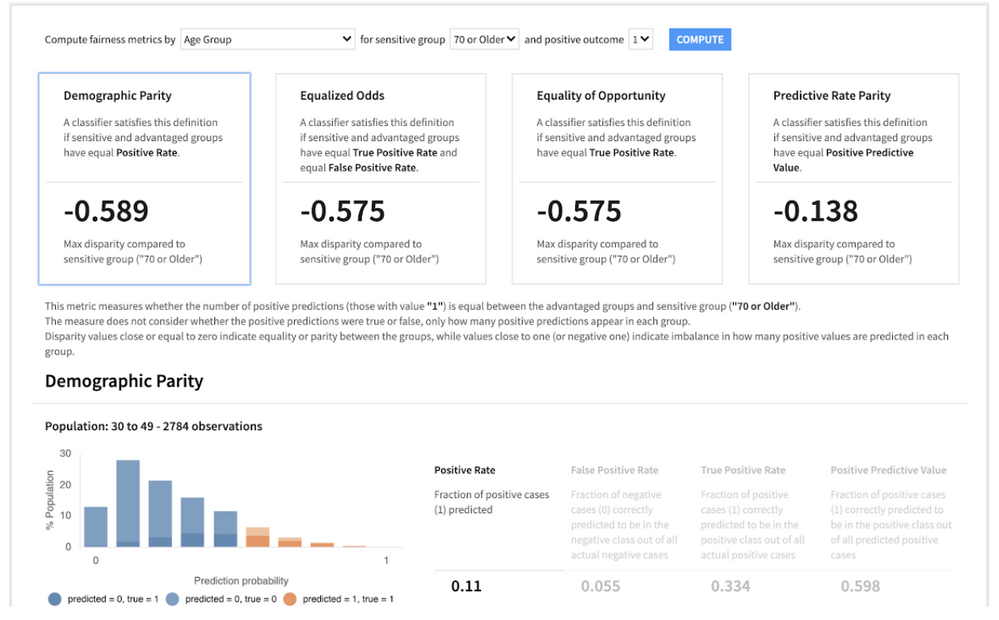

Model Fairness Reports

When you generate a Model Fairness Report, Dataiku computes four different metrics.

We’re providing a dashboard of key model fairness metrics so that AI builders and consumers are informed and can compare how models treat members of different groups. These groups can be defined by attributes of interest such as age, race, and sex.

The four metrics we have included are considered some of the core fairness metrics in the literature.

For illustration, suppose we are working on a loan assessment use case. The four metrics are then:

- Demographic Parity: People across groups have the same chance of getting a loan.

- Equalized Odds: Across groups, people who will not default have the same chance of getting the loan, and people who will default have the same chance of being rejected.

- Equality of Opportunity: Across groups, people who will not default have the same chance of getting a loan.

- Predictive Rate Parity: Among people who are given the loan, the proportion who will not default is the same across groups (equal chance of success given acceptance).
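To show what each of these four definitions actually compares, here is a minimal from-scratch sketch using the loan framing above. It assumes a label of 1 means the applicant will not default and a prediction of 1 means the loan is granted. It computes per-group rates and the largest gap between groups for each one; a gap of zero means the corresponding parity holds exactly. The function names are hypothetical, and this is not how Dataiku's report is implemented.

```python
import math
from collections import defaultdict


def _rate(num, den):
    return num / den if den else float("nan")


def fairness_report(groups, y_true, y_pred):
    """y_true: 1 = will not default; y_pred: 1 = loan granted."""
    stats = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0, "tn": 0})
    for group, truth, pred in zip(groups, y_true, y_pred):
        key = ("tp" if truth else "fp") if pred else ("fn" if truth else "tn")
        stats[group][key] += 1

    per_group = {}
    for group, s in stats.items():
        per_group[group] = {
            # Demographic parity: P(granted)
            "selection_rate": _rate(s["tp"] + s["fp"], sum(s.values())),
            # Equality of opportunity (and half of equalized odds): P(granted | will not default)
            "tpr": _rate(s["tp"], s["tp"] + s["fn"]),
            # Other half of equalized odds: P(rejected | will default)
            "tnr": _rate(s["tn"], s["tn"] + s["fp"]),
            # Predictive rate parity: P(will not default | granted)
            "ppv": _rate(s["tp"], s["tp"] + s["fp"]),
        }

    gaps = {}
    for metric in ("selection_rate", "tpr", "tnr", "ppv"):
        # Ignore groups where the rate is undefined (empty denominator).
        values = [v[metric] for v in per_group.values() if not math.isnan(v[metric])]
        gaps[metric] = max(values) - min(values) if values else float("nan")
    return per_group, gaps


# Invented applicants from two groups, A and B.
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]

per_group, gaps = fairness_report(groups, y_true, y_pred)
print(per_group)
print(gaps)
```

Reading the gaps: demographic parity looks at `selection_rate`, equality of opportunity at `tpr`, equalized odds requires both `tpr` and `tnr` gaps to be (near) zero, and predictive rate parity looks at `ppv`. In practice you would decide on an acceptable tolerance rather than demand an exact zero.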

You can also extend the report with custom metrics if you wish. Together, these help you better understand how the model affects subpopulations of interest and take appropriate action from there.

Model Document Generator

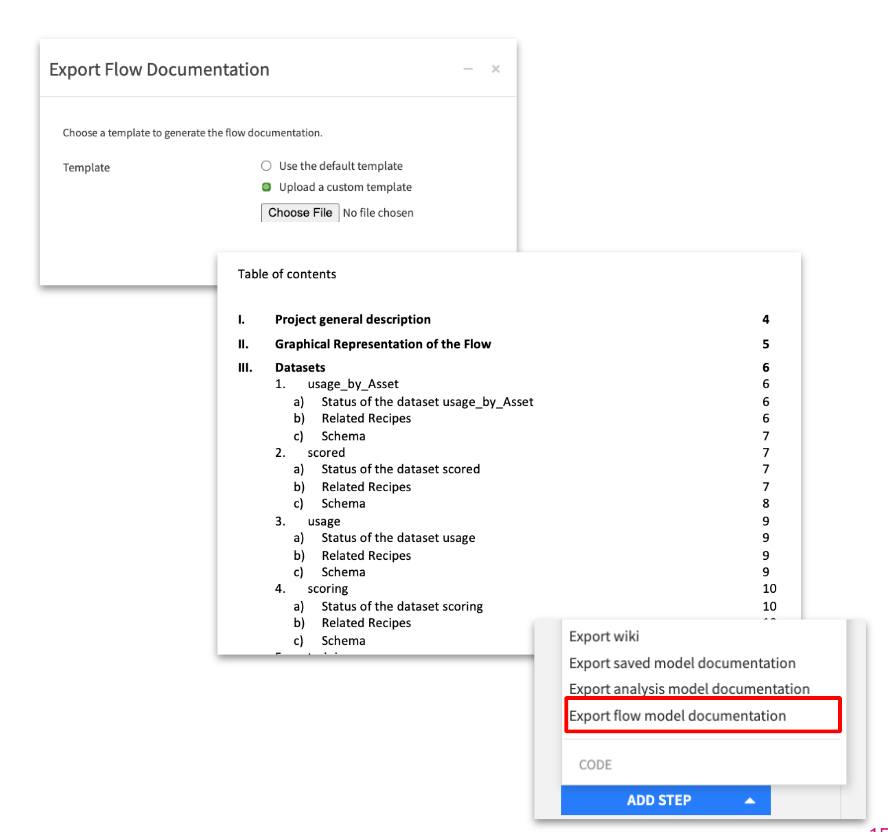

Finally, you can use the Model Document Generator to create documentation for any trained model. It generates a Microsoft Word™ .docx file.

You can circulate this documentation more widely throughout your organization, keep it for regulatory purposes, and use it to demonstrate that you followed industry best practices when building your model.

Go Further on the Dataiku Academy

Now that you can efficiently experiment with and validate the models you have built, it is time to consider how to put them into production. Find the full course on MLOps on the Dataiku Academy:

And with that, you’ve learned about six hidden gems in Dataiku. Hopefully, you feel inspired to introduce them into your modeling process, whether today or in the future. If you have any questions or comments, don’t hesitate to leave them below, and I’ll be happy to follow up with a response!
