10 Holiday Treats in Dataiku 11.2: Discover Newly Delivered Product Enhancements

The festive season is in full swing, and here at Dataiku, we’re counting down to the holidays, a cherished time for reflection, togetherness, and gratitude. But while I’ve been busy wrapping presents, my Dataiku engineering family has been hard at work preparing a different kind of gift — a bountiful array of new features and product enhancements for our customers, all delivered with Dataiku 11.2!

If you missed what’s new in last month’s product update, available October 21, 2022, check out this article: Brand New Features for Modelers, ML Engineers, and Analysts in Dataiku 11.1

For the curious, here are my 10 favorite treats from this update:

- Rename a dataset

- Export train/test sets and predicted test data from a Visual ML analysis

- Native Databricks JDBC connector

- Inclusion/exclusion operators in filters

- Image view with advanced filtering capabilities for computer vision datasets

- Multiple enhancements to time series statistical analysis and visual forecasting

- Language-specific logs for code recipe debugging

- Charting improvements

- Enable default timezone on SQL connections

- Public API for Workspaces, webapps, and more

Continue reading to dig into more details for each and, as always, check out the full details in the reference documentation and release notes.

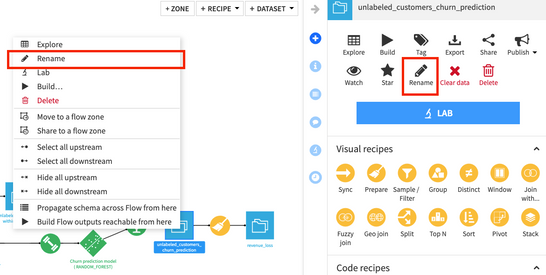

Rename a Dataset

Hurrah! You can now rename a dataset, either by selecting it in the Flow and using the right-click menu or by selecting Rename from the Actions menu in the right panel. Dataiku automatically scans the project for downstream and associated elements that will be affected by this change (e.g., notebooks, scenarios, recipes, variables) and lists the objects that will be updated so you can review the impact prior to confirming the change.

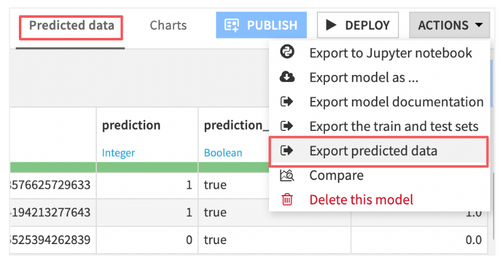

Export Train/Test Sets and Predicted Test Data From a Visual ML Analysis

Sometimes, you want to reproduce the exact results of an ML experiment performed in Dataiku’s Visual ML in another environment or do further analysis on the datasets used to train and test a model. To facilitate these activities, with Dataiku 11.2, you can export the input dataset with a row_origin flag that indicates which partition (train or test) a record was assigned to.
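As a rough illustration of how the exported file could be used outside Dataiku, the sketch below splits the rows back into their original partitions using the row_origin flag. The sample data, the other column names, and the flag values "train" and "test" are assumptions made for this example, not confirmed details of the export format.

```python
import csv
import io

# Hypothetical export: every column except row_origin, and the
# "train"/"test" flag values, are assumptions for illustration.
EXPORTED_CSV = """customer_id,age,churned,row_origin
1,34,0,train
2,51,1,train
3,27,0,test
4,45,1,train
5,39,0,test
"""


def split_by_row_origin(csv_text, flag_column="row_origin"):
    """Group exported rows by the value of the row_origin flag."""
    partitions = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        origin = row.pop(flag_column)  # drop the flag from the feature columns
        partitions.setdefault(origin, []).append(row)
    return partitions


partitions = split_by_row_origin(EXPORTED_CSV)
train_rows = partitions.get("train", [])
test_rows = partitions.get("test", [])
print(len(train_rows), "train rows,", len(test_rows), "test rows")
```

Keeping the split tied to the flag, rather than re-sampling, is what lets another environment train and evaluate on exactly the same records.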

Furthermore, you can also export predicted data from the results panel of a Visual ML model to quickly compute custom performance metrics or visualizations on the first 50,000 rows of the test set. This saves you a few steps versus exporting the test set and applying a Score recipe to create a similar table.
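To give a flavor of the kind of custom metric you might compute on the exported predictions, here is a minimal sketch of a cost-weighted error for a binary classifier. The column names (churned, prediction), the sample rows, and the cost values are all hypothetical; adapt them to whatever your exported table actually contains.

```python
import csv
import io

# Hypothetical predicted-data export; the column names are assumptions.
PREDICTED_CSV = """churned,prediction
1,1
0,0
1,0
0,1
1,1
0,0
"""


def cost_weighted_error(rows, target_col, pred_col, fn_cost=5.0, fp_cost=1.0):
    """Average misclassification cost, with false negatives costing more
    than false positives (the default costs are arbitrary examples)."""
    total = 0.0
    count = 0
    for row in rows:
        actual, predicted = int(row[target_col]), int(row[pred_col])
        if actual == 1 and predicted == 0:
            total += fn_cost  # missed a positive case
        elif actual == 0 and predicted == 1:
            total += fp_cost  # raised a false alarm
        count += 1
    return total / count if count else 0.0


rows = list(csv.DictReader(io.StringIO(PREDICTED_CSV)))
metric = cost_weighted_error(rows, "churned", "prediction")
print("Average cost per row:", metric)
```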

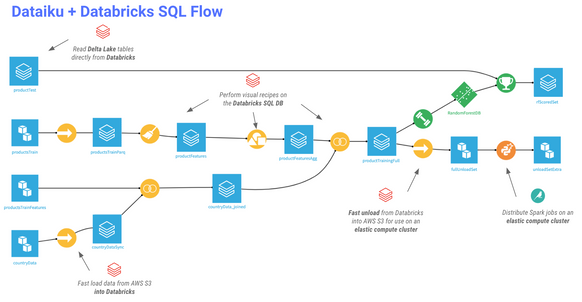

Native Databricks JDBC Connector

With the new update, you can leverage Databricks as a SQL database and push down visual and SQL recipe workloads to the Databricks engine for efficient, in-database computation. Fast path capabilities allow you to rapidly load and unload data between Databricks and other sources like S3 and ADLS, so you can easily access, explore, and analyze Delta Lake files and use them to build data products in Dataiku’s collaborative environment — with or without the use of code. Note that this connection does not utilize Databricks as a Spark engine; ML training and Spark workloads will continue to run on an EKS/AKS or Hadoop cluster.

Inclusion/Exclusion Operators in Filters

In all Dataiku visual recipes where you can define custom filters and build conditional logic, there are new operators for specifying valid or invalid values for a rule. Use these operators the same way you would use IN and NOT IN in other languages to check whether an element is present in, or absent from, a list.
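For readers who think in code, the semantics of the inclusion and exclusion operators resemble Python's membership tests. The snippet below is only an analogy with made-up data; it is not how Dataiku's filters are implemented.

```python
# Hypothetical rows and allowed values, for illustration only.
ALLOWED_COUNTRIES = {"FR", "DE", "ES"}

rows = [
    {"id": 1, "country": "FR"},
    {"id": 2, "country": "US"},
    {"id": 3, "country": "DE"},
    {"id": 4, "country": "JP"},
]

# Inclusion: keep rows whose value appears in the list.
included = [row for row in rows if row["country"] in ALLOWED_COUNTRIES]

# Exclusion: keep rows whose value does not appear in the list.
excluded = [row for row in rows if row["country"] not in ALLOWED_COUNTRIES]

print([row["id"] for row in included])
print([row["id"] for row in excluded])
```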

Image View With Advanced Filtering Capabilities for Computer Vision Datasets

For image labeling and computer vision datasets, a new image view is available to visualize the feed of images, along with corresponding annotations and/or predictions. This view also provides advanced filtering capabilities (e.g., display only images where a given object has been detected) and detailed metadata for each image.

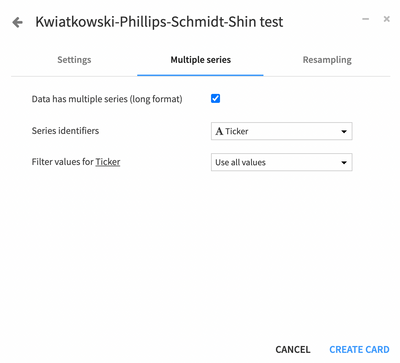

Time Series Statistical Analysis and Visual Forecasting Enhancements

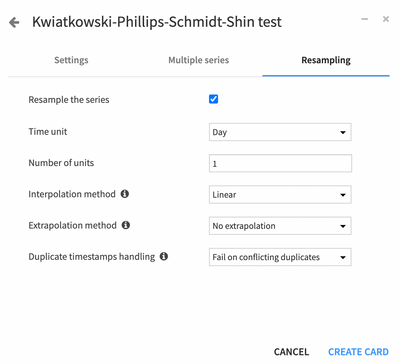

Dataiku 11.2 continues to shore up capabilities around exploratory data analysis and forecasting tasks for time series. For example, when building statistical tests on time series data, you can now specify a series identifier to run the analysis across multiple series, applying filters if desired.

If your time series is irregular or some series aren’t the same length, apply resampling techniques such as interpolation to infer numerical values for missing timestamps in the middle of the series, or extrapolation to infer values for timestamps at the beginning or end of the series.
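The difference between the two techniques can be sketched in a few lines of Python. This example assumes integer timestamps, linear interpolation for interior gaps, and a simple "repeat the nearest known value" rule for extrapolation; Dataiku offers its own set of methods, so treat this as a conceptual illustration only.

```python
def resample(series, start, end, step=1):
    """Fill a regular grid from start to end (inclusive).

    series maps integer timestamps to observed values. Interior gaps are
    linearly interpolated; timestamps before the first or after the last
    observation are extrapolated by repeating the nearest known value.
    """
    known = sorted(series.items())
    first_t, first_v = known[0]
    last_t, last_v = known[-1]
    filled = []
    for t in range(start, end + 1, step):
        if t in series:
            value = series[t]
        elif t < first_t:
            value = first_v  # extrapolation at the beginning
        elif t > last_t:
            value = last_v  # extrapolation at the end
        else:
            # Interpolation between the closest known neighbors.
            t0, v0 = max((p for p in known if p[0] < t), key=lambda p: p[0])
            t1, v1 = min((p for p in known if p[0] > t), key=lambda p: p[0])
            value = v0 + (v1 - v0) * (t - t0) / (t1 - t0)
        filled.append((t, value))
    return filled


observed = {2: 10.0, 3: 12.0, 6: 18.0}  # timestamps 4 and 5 are missing
resampled = resample(observed, start=0, end=7)
for timestamp, value in resampled:
    print(timestamp, value)
```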

If you’re using the visual forecasting task introduced with Dataiku 11.0, you’ll be happy to hear that hyperparameter search is now enabled by default for most algorithms. This approach, combined with the option for time-aware k-fold cross-validation, may mean training times run a bit longer, but generally leads to better performance metrics and model outcomes.
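If "time-aware" cross-validation is new to you, the key idea is that each test fold must come after its training data in time, so the model is never evaluated on the past. The sketch below shows one common scheme, an expanding training window. The function name and the splitting rule are assumptions for illustration and may differ from what Dataiku does internally.

```python
def time_aware_folds(n_samples, n_folds):
    """Yield (train_indices, test_indices) pairs over time-ordered samples.

    The training window grows with each fold, and every test block
    starts right after the end of its training window.
    """
    block = n_samples // (n_folds + 1)
    if block == 0:
        raise ValueError("Not enough samples for the requested number of folds")
    for k in range(1, n_folds + 1):
        train_end = block * k
        # The last fold absorbs any leftover samples.
        test_end = n_samples if k == n_folds else train_end + block
        yield list(range(train_end)), list(range(train_end, test_end))


folds = list(time_aware_folds(n_samples=10, n_folds=3))
for train_idx, test_idx in folds:
    print("train:", train_idx, "test:", test_idx)
```

Because a model is refit for every fold and every hyperparameter combination, this also shows why training times can grow when both options are enabled.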

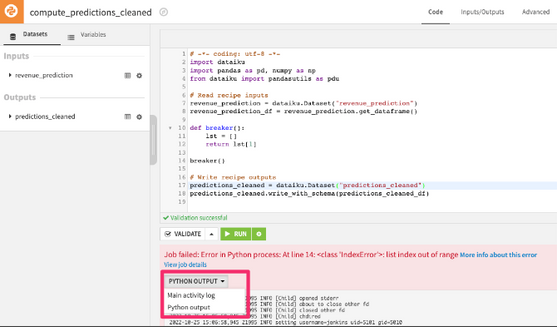

Language-specific Logs for Code Recipe Debugging

To help coders troubleshoot errors and failed jobs in Python, R, or Shell code recipes, Dataiku now lets them select language-specific logs and debug their code without leaving the page to go to Jobs > Activity logs. This convenient shortcut to job logs is also available for recipes that use containerized or distributed compute with Docker and Kubernetes.

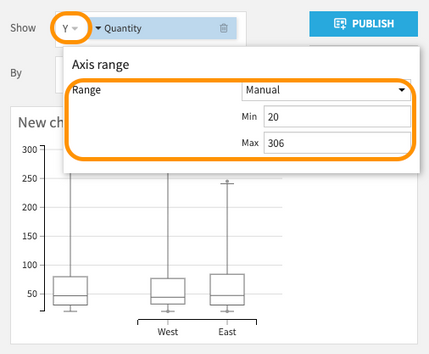

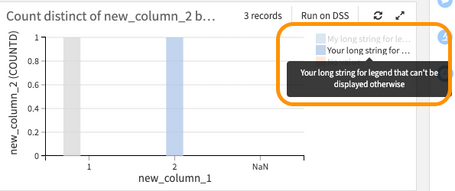

Charting Improvements

We made several interface improvements to reduce day-to-day pains related to charting in Dataiku. Ever had a long category label that gets truncated in a chart legend? The full text will now be displayed as a tooltip when you hover over the legend, even for long strings. Define custom Y axis ranges for box plots and enjoy a more intuitive interface for computing aggregations along multiple dimensions in chart objects like pivot tables.

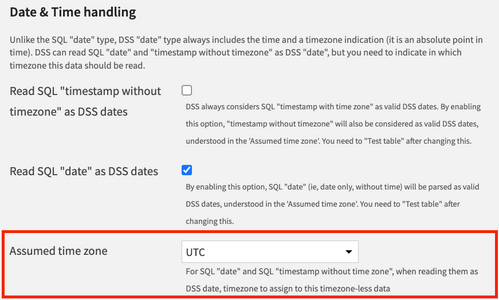

Enable Default Timezone on SQL Connections

When working with SQL date types that don't include time zones (i.e., "date" or "timestamp without time zone"), you may find yourself visiting the dataset’s date & time handling settings to select an appropriate time zone value. To streamline this process, Dataiku instance admins can now set a default assumed time zone on a given connection so that it is preselected for you.
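To see why the assumed time zone matters, consider what happens to the same zone-less timestamp under two different assumptions. This sketch uses only Python's standard library with fixed winter offsets for simplicity (a real setup would use named zones that handle daylight saving time); the timestamp and zones are made-up examples.

```python
from datetime import datetime, timedelta, timezone

# A value as it might come from a "timestamp without time zone" column.
naive = datetime(2022, 12, 15, 9, 0, 0)


def to_utc(naive_ts, assumed_tz):
    """Interpret a zone-less timestamp in the assumed zone, then convert to UTC."""
    return naive_ts.replace(tzinfo=assumed_tz).astimezone(timezone.utc)


# Fixed offsets are a simplification that ignores daylight saving time.
paris_winter = timezone(timedelta(hours=1), "CET")
new_york_winter = timezone(timedelta(hours=-5), "EST")

as_paris = to_utc(naive, paris_winter)
as_new_york = to_utc(naive, new_york_winter)

print("Assuming Paris:   ", as_paris.isoformat())
print("Assuming New York:", as_new_york.isoformat())
print("Difference:", as_new_york - as_paris)
```

The two interpretations point to instants several hours apart, which is why a sensible connection-level default can prevent subtle errors.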

Public API Additions

As always, we recognize that not all users wish to utilize the visual interface to interact with Dataiku objects. This is why we provide REST and Python public APIs for nearly all tasks you can accomplish inside the visual interface. With this product update, new APIs are available for Dataiku Workspaces (update, delete, and other actions), webapp management, and more.

We Are Also Thankful for You This Holiday Season!

Several of these updates were influenced by customer requests and ideas surfaced through the Dataiku Community and technical support channels. We are grateful for your feedback that helps us to improve our platform!

Try It Out for Yourself

Dataiku 11.2 is available for download or upgrade today, and includes all of these latest features and capabilities developed with users like you in mind. What’s your favorite new feature in this product update? Let us know in the comments!

To get the full details about Dataiku 11.2, check out the release notes below.
