Labeling > Support providing Annotations as optional Input

Hi,

I am using the new Labeling recipe, as the name suggests, to add labels to unlabelled images. In my use case, the images are quite large and each image requires around 10-20 annotations.

This means the manual effort needed to label a decent number of images is quite high.

So far I have labelled around 70 images and used them to train my model. I then applied this model to a larger set of unlabelled images with good accuracy, though there is still room for improvement.

To make it easy to give the model many more training images, the (highly accurate) outputs of previous model runs (label information for detected objects and their categories) could be reused as an optional input to the labeling recipe.

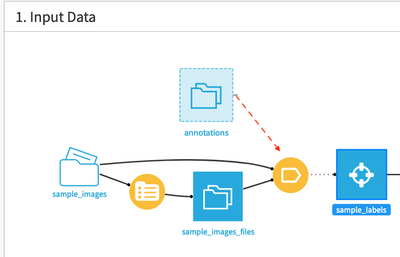

As a result, the labeling recipe would support:

- A folder of images + metadata (list contents recipe) as input, as it currently does

- Additionally, and optionally, a dataset containing annotations for at least some of the images in that folder. These labels would be applied in the annotation step, meaning that bounding boxes and their categories are already drawn on the images. The annotator then only has to accept the labels (if they are correct) or make minor modifications, which would save a lot of time overall.
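To illustrate the proposed merge step, here is a minimal sketch. It is not Dataiku's API: `Box`, `LabelingTask`, `build_tasks` and the `min_confidence` threshold are hypothetical names chosen for illustration, and the confidence filter is an assumption about how "highly accurate" predictions might be selected. Every image from the folder becomes a task, and images that have predictions start with those boxes pre-drawn.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Box:
    # Hypothetical bounding-box record produced by a previous model run.
    category: str
    x: float
    y: float
    width: float
    height: float
    confidence: float = 1.0


@dataclass
class LabelingTask:
    image_path: str
    # Boxes pre-drawn for the annotator to accept or adjust; empty if none were supplied.
    pre_annotations: List[Box] = field(default_factory=list)


def build_tasks(
    image_paths: List[str],
    predictions: Optional[Dict[str, List[Box]]] = None,
    min_confidence: float = 0.8,  # assumed threshold, not a real recipe setting
) -> List[LabelingTask]:
    """Combine the image folder with an optional predictions dataset."""
    predictions = predictions or {}
    tasks = []
    for path in image_paths:
        # Keep only predictions confident enough to be worth pre-drawing.
        boxes = [b for b in predictions.get(path, []) if b.confidence >= min_confidence]
        tasks.append(LabelingTask(image_path=path, pre_annotations=boxes))
    return tasks


if __name__ == "__main__":
    images = ["img_001.png", "img_002.png", "img_003.png"]
    preds = {
        "img_001.png": [Box("category_a", 10, 20, 50, 40, 0.95),
                        Box("category_a", 200, 80, 30, 30, 0.55)],
        "img_003.png": [Box("category_b", 5, 5, 25, 25, 0.91)],
    }
    for task in build_tasks(images, preds):
        print(task.image_path, len(task.pre_annotations))
```

Here `img_002.png` has no predictions, so it is presented to the annotator as a blank image exactly as today, which keeps the annotations input truly optional. Predictions for images that are not in the folder are simply ignored.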